Owning the ageing process at the Wellcome Collection, London, for the Spectator, 11 March 2026

More than thirty contemporary artists (Anna Maria Maiolino, Tam Joseph and the like) contribute to Wellcome Collection’s latest exhibition, which asks what it’s like to age at a time of unparalleled longevity. But as so often happens at Wellcome’s exhibitions, it’s the ephemera that draw the eye first.

“These 2 men are the same age,” says a leaflet advertising Kellogg’s All-Bran breakfast cereal. “One has driving power — energy — the will to succeed. The other is listeless — tired all the time — it is an effort for him to plod through each day’s work.”

The point being, ageing is a process, not an event. It is as much a matter of style, and manners, and even morality, as it is a matter of medicine. Ageing is, to a not inconsiderable degree, something we do to ourselves, and something we do to each other.

In 1972 Simone de Beauvoir nailed this point beautifully in her still rather neglected book La Vieillesse (the American edition translated that as “Coming of Age”; a revealing cop-out). As she sets out to break the conspiracy of silence around old age — this ‘forbidden subject’, she calls it — she demands her readers use their imaginations and recognise themselves in the aged other.

“If we do not know what we are going to be,” she writes, “we cannot know what we are: let us recognise ourselves in this old man or in that old woman. It must be done if we are to take upon ourselves the entirety of our human state.”

Like those good fellows at Kellogg’s, curator Shamita Sharmacharja wants visitors to own their ageing process, and value the prospect — if not indeed the present reality — of old age.

It’s a quixotic enterprise because, as Philip Roth once quipped, “In every calm and reasonable person there is hidden a second person scared witless about death,” and there are few reminders of death more terrifying than the one that confronts us in the mirror each morning. “About a year ago,” wrote the American food writer M.F.K. Fisher, “I suddenly realised that I could not face walking toward myself again in the morning because here is this strange, uncouth, ugly, kind of toad-like woman… long thin legs, long thin arms, and a shapeless little toad-like torso and this head at the top with great staring eyes. And I thought, Jesus, why do I have to do this?”

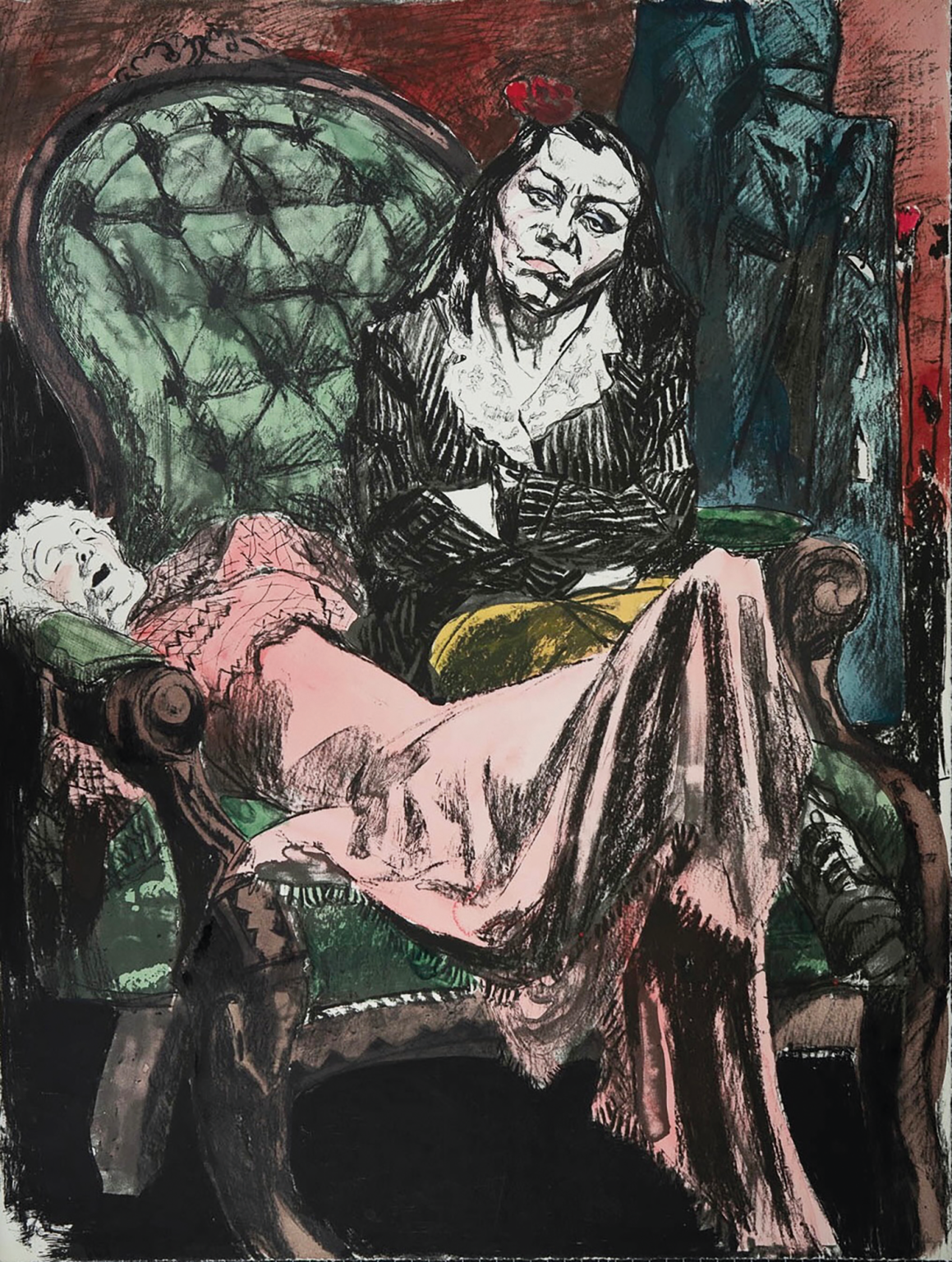

Shock and outrage find their visible expression at the Wellcome in Paula Rego’s bruised and twisted self-portraits, made in 2017 after she fell. (Awakened to our society’s ubiquitous ageism, I am absolutely *not* going to write “after she had a fall”).

William Utermohlen’s visual record of dementia is only marginally more muted: an achingly articulate series recording his steadily disintegrating impression of his own face.

How do you weave a worthwhile life-stage from such gloomy material? The older I get, the more I am struck by the profound wisdom of Eric Idle’s song at the end of Monty Python’s Life of Brian. Looking on the bright side of life are Kimiko Nishimoto and Shiro Oguni. Oguni’s Restaurant of Mistaken Orders (represented here by a few knick-knacks, aprons, menus) is a pop-up dining experience employing servers with dementia. By accepting from the outset that orders might be incorrect, the restaurant fosters patience, empathy, and joyful human connection over cold, technical perfection.

Nishimoto, meanwhile, began taking self-portraits in her seventies, using Photoshop to stage herself left out with the bins, or hanging from a washing line, or drifting serenely from a tree. The moral being, I suppose, that if life discards us at the end, we may as well have some fun with the idea.

Maija Tammi’s photographic print Immortal’s Birthday Party conjures up a space that’s part 18th-century Wunderkammer, part child’s bedroom, like the lair of a particularly naive Nosferatu. The trouble being, of course, that age is real, and there really is a moment when we have to put away childish things, and even middle-aged things, in time.

Why?

Well, look to The Way of Things, the world’s first (and, to everyone’s secret relief, last) verse-form book of popular science. In it the Epicurean philosopher Lucretius argued that it’s not just life that’s finite; life’s possibilities are finite, too. Yes, you can fall in love any number of times, but you can only fall in love for the first time once. By the time all gratifications have been experienced, living endlessly is futile. “Do you expect me to invent some new contrivance for your pleasure?” Nature sneers. Growing up and ageing always incur a loss, but refusing to grow up (remaining perfectly innocent and untouched by the world) is an even greater loss. As J.M. Barrie understood, Peter Pan ultimately represents Death.

Am I arguing that, for all Sharmacharja’s efforts, I came away thinking that ageing is a uniformly bad idea?

Not at all. For women it’s much worse. (Or as that All-Bran leaflet has it: “These 2 women are the same age. One has the bloom of youth. The other is wrinkled, gray, careworn, far older than her years.”)

The depressing fact is, it takes more effort for women to camouflage their temporal nature. They’re the ones who bleed, who give birth, who feed infants, and who, at no great age, cease to be able to give birth.

Wellcome’s approach to exhibition-making can get a bit modish. The desire to connect science to medicine, and medicine to society, and society to politics, and politics to technology, and back to science again, can induce (in this visitor, at any rate) the sort of dazed passivity I associate with getting dangerously drunk with anthropology graduates.

In this show, however, I think medium and message come together superbly. Ageing, in general, leads to old age, and old age leads to death, and death leads to terror, and terror leads to meanness, especially towards women, whose capacity for childbearing is so painfully and obviously truncated halfway through life. No wonder women, just as much as men, judge other women harshly if (by dropping a tampon out of their handbag, say) they reveal their animal nature. (That’s a genuine psychological experiment, by the way, and a genuine result.)

Is this intimate connection between death-terror and misogyny in any way fair? Of course not. But as a fact of life it’s the very devil to get around. Serena Korda’s striking ceramic installation Wild Apples tries to reframe the menopause as a powerful moment of transformation, but how does it do this? By reclaiming, for hard-done-by women, the archetype of the hag and the crone. So much for the escapist power of art.

The Coming of Age wants us to make that Beauvoir-esque imaginative leap and think our way into aged skin. We probably ought to give this a try. The world is getting older by the day; soon it will be getting emptier, as working-age adults find the costs of elder care so burdensome that they can’t afford to have children. I suppose we’d better make our peace with a rapidly ageing world, and make the best of it.

But let’s be frank here: empathy is a bore. And other responses to ageing are available.

The ancient Greeks (at least according to Hannah Arendt, in her 1958 book The Human Condition) developed a surprisingly hard-nosed view of ageing and death. They believed that being remembered was the nearest they could realistically come to immortality. So they revered fame, and the moral and physical excellence that would secure it. Needless to say, they were vain to a fault.

Remember this, once it dawns on you that The Coming of Age is as much about sex, and fashion, and death, and our roles in society, as it is about its proper subject. People are like that around ageing, and have been for the longest time. Get them on the subject of ageing, and they will end up covering everything else under the sun, in a frantic bid to evade thoughts of where ageing is leading them (and dress it up how you like, it’s nowhere pretty).

Evasion is the point. And meanwhile, as it says on the T-shirt (also part of the exhibition, and I desperately want one): “DON’T DIE.”