Exploring volumetric capture for New Scientist, 13 December 2017

OUTSIDE Dimension Studios in Wimbledon, south London, is one of those tiny wood-framed snack bars that served commercial travellers in the days before motorways. The hut is guarded by old shop dummies dressed in fishnet tights and pirate hats. If the UK made its own dilapidated version of Westworld, the cyborg rebellion would surely begin here.

Steve Jelley orders us breakfast. Years ago he left film production to pursue a career developing new media. He’s of the generation for whom the next big thing is always just around the corner. Most of them perished in the dot-com bust of 2001, but Jelley clung to the dream, and now Microsoft has come calling.

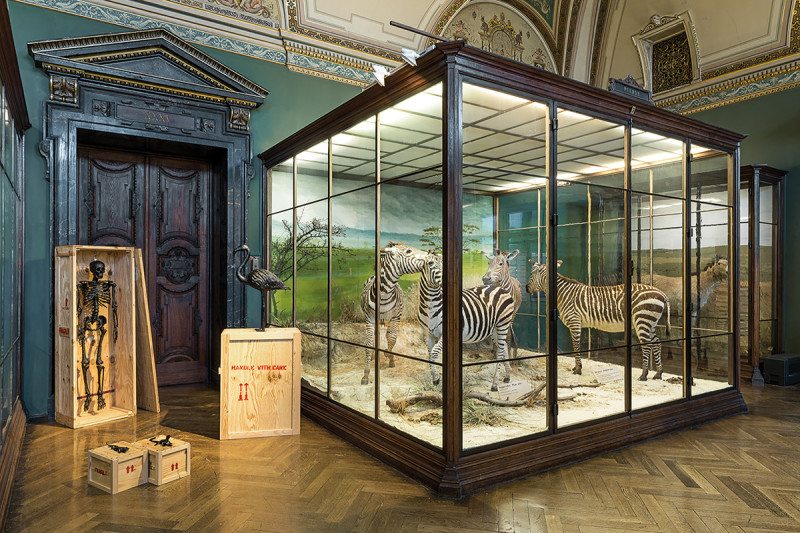

His company, Hammerhead, makes 360-degree videos for commercial clients. Its partner in this current venture, Timeslice Films, is best known for volumetric capture of still images – the business of cinematographically recording forms in three dimensions – a practice that goes back to founder Tim MacMillan’s art-school experiments of the early 1980s.

Steve Sullivan, director of the Holographic Video initiative at Microsoft, is fusing both companies’ technical expertise to create volumetric video: immersive entertainment that’s indistinguishable from reality.

There are only three studios in the world that can do this with any degree of conviction, and Wimbledon is the only one outside the US. Still, I’m sceptical. It has been clear for a while that truly immersive media won’t spring from a single “light-bulb” moment. The technologies involved are, in conceptual terms, surprisingly old. Volumetric capture is a good example.

MacMillan is considered the godfather of this tech, having invented the “bullet time” effect central to The Matrix. But The Matrix is 18 years old, and besides, MacMillan reckons that pioneer photographer Eadweard Muybridge got to the idea years before him – in fact, decades before cinema was invented.

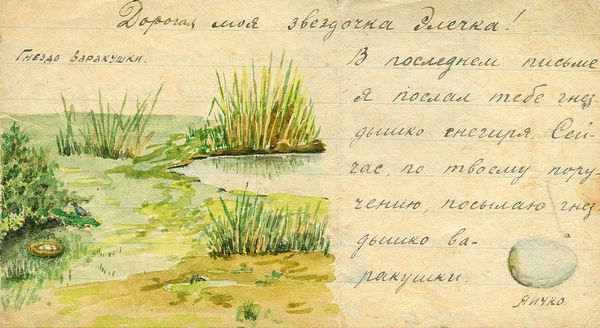

Then there’s motion capture (or mocap): recording the movement of points attached to an actor, and from those points, constructing the performance of a three-dimensional model. The pioneering Soviet physiologist Nikolai Bernstein invented the technique in the early 1920s, while developing training programmes for factory workers.

Truly immersive media will be achieved not through magic bullets, but through thugging – the application of ever more computer power, and the ever-faster processing of more and more data points. Impressive, but where’s the breakthrough?

“Well,” Jelley begins, handing me what may be the largest bacon sandwich in London, “you know this business of the ‘uncanny valley’…?” My heart sinks slightly.

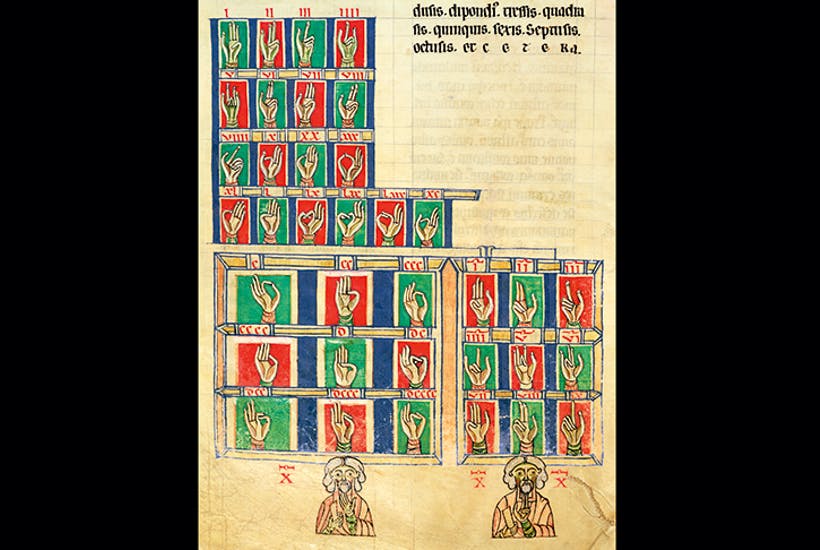

Most New Scientist readers will be familiar with Masahiro Mori’s concept of the uncanny valley. It’s a curiously anglophone obsession. In the 30 years after the Japanese engineer published his paper in 1970, it was referred to in Japanese academic literature only once. Mori himself says the idea was never meant to be taken scientifically. He was merely warning robot designers, at a time when humanoid robots didn’t exist, that the closer their works came to resembling people, the creepier we would find them.

In the West, discussions of the uncanny valley have grown to a sizeable cottage industry. There have been expensive studies done with PET scans to prove the existence of the effect. But as Mori commented in an interview in 2012: “I think that the brainwaves act that way because we feel eerie. It still doesn’t explain why we feel eerie to begin with.”

Our discomfort extends beyond encounters with physical robots to include some cinematic experiences. Many are the animated movies that have employed mocap to achieve something like cinematic realism, only to plummet without trace into the valley.

Elsewhere, actor Andy Serkis famously uses mocap to transform himself into characters like Gollum in The Lord of the Rings, or the chimpanzee Caesar in Rise of the Planet of the Apes, and we are carried along well enough by these films. The one creature this technology can’t emulate, however, is Serkis himself. Though mocap now renders human body movement with impressive realism, the human face remains a machine far too complex to be seamlessly emulated even by the best system.

Jelley reckons he and his partners have “solved the problem” of the uncanny valley. He leads me into the studio. There’s a small, circular, curtained-off area – a sort of human-scale birdcage. Rings of lights and cameras are mounted on scaffolds and hang from a moveable and very heavy-looking ceiling rig.

There are 106 cameras: half of them recording in the infrared spectrum to capture depth information, half of them recording visible light. Plus, a number of ultraviolet cameras. “We use ultraviolet paint to mask areas for effects work,” Jelley explains, “so we record the UV spectrum, too. Basically we use every glimmer of light we can get short of asking you to swallow radium.”

The cameras shoot between 30 and 60 times a second. “We have a directional map of the configuration of those cameras, and we overlay that with a depth map that we’ve captured from the IR cameras. Then we can do all the pixel interpolation.”
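For readers who want a feel for what overlaying a depth map on known camera geometry might involve, here is a minimal sketch in Python. It is not the studio's pipeline, which Jelley does not describe in detail. It assumes a simple pinhole camera model, and the function name `backproject` and the parameters `fx`, `fy`, `cx` and `cy` (focal lengths and image centre, in pixels) are illustrative choices, not anything taken from the studio. The idea is only that each depth reading, combined with where its pixel sits in the image, fixes a point in 3D space.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def backproject(
    depth: List[List[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Turn one camera's depth map into 3D points.

    Assumes a pinhole camera (an illustrative assumption):
    fx, fy are focal lengths in pixels, (cx, cy) is the image centre.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):        # v: pixel row
        for u, z in enumerate(row):        # u: pixel column, z: depth
            if z <= 0:                     # treat non-positive depth as "no reading"
                continue
            x = (u - cx) * z / fx          # horizontal offset scaled by depth
            y = (v - cy) * z / fy          # vertical offset scaled by depth
            points.append((x, y, z))
    return points


# A made-up 2x3 depth map (arbitrary units); 0.0 marks a missing reading.
depth_map = [
    [2.0, 2.0, 0.0],
    [2.0, 1.5, 2.0],
]
cloud = backproject(depth_map, fx=1.0, fy=1.0, cx=1.0, cy=0.5)
print(len(cloud))  # number of valid 3D points recovered
```

A real rig would presumably repeat something like this for every depth camera, move each set of points into a shared coordinate frame using that camera's known position and orientation, and then blend colour from the visible-light cameras onto the resulting surface. Those later steps are left out here.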

This is a big step up from mocap. Volumetric video captures real-time depth information from surfaces themselves: there are no fluorescent sticky dots or sliced-through ping-pong balls attached to actors here. As far as the audience is concerned, volumetric video is essentially just that, video, and as close to a true record as anything piped through a basement full of computers is ever going to get.

So what kind of films are made in such studios? Right now, the education company Pearson is creating virtual consultations for trainee nurses. Fashion brands and car companies have shot adverts here. TV companies want to use them for fully immersive and interactive dramas.

On a table nearby, a demo is ready to watch on a Vive VR headset. There are three sets of performances for me to observe, all looping in a grey, gridded, unadorned virtual space: the digital future as a filing cabinet. There are two experiments from Sullivan’s early days at Microsoft. Thomas Jefferson is pure animatronic; the two Maori haka dancers are engaging, if unhuman. The circus gymnast swinging on her hoop is different. I recognise her, or think I do. My body language must be giving the game away, because Jelley laughs.

“Go up to her,” he says. I can’t place where I’ve seen her before. I try and catch her eye. “Closer.”

I’m invading her space, and I’m not comfortable with this. I can see the individual threads securing the sequins to her costume. More than that: I can smell her. I can feel the heat coming from her skin.

I know she’s not real, but my body doesn’t. Every bit of me that might have rejected a digitised face as uncanny has fallen hook, line and sinker for this super-real gymnast. And this, presumably, is why the bit of my mind that enables me to communicate freely and easily with my fellow humans is in overdrive, trying to plug the gaps in my experience, as if to say, “Of course her skin is hot. Of course she has a scent.”

Mori’s uncanny valley effect is not quantifiable, and I don’t suppose my experience is any more measurable than the one Mori identified. But I’d bet the farm that, had you scanned me, you would have seen all manner of pretty lights. This hasn’t been an eerie experience. Quite the reverse. It’s terrifyingly ordinary. Almost, I might say, human.

Jelley walks me back to the main road. Neither of us says a word. He knows what he has. He knows what he has done.

Outside the snack shack, three shop dummies in pirate gear wobble in the wind.