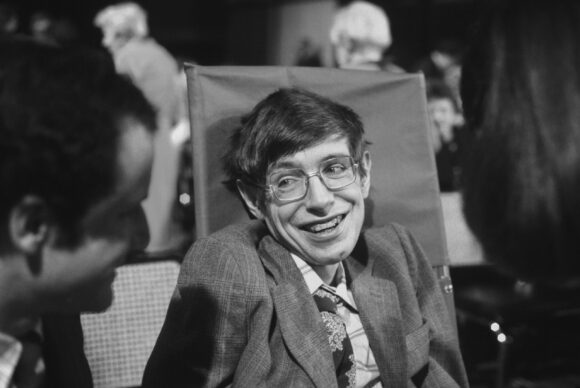

I could never muster much enthusiasm for the theoretical physicist Stephen Hawking. His work, on the early universe and the nature of spacetime, was Nobel-worthy, but those of us outside his narrow community were horribly short-changed. His 1988 global best-seller A Brief History of Time was incomprehensible, not because it was difficult, but because it was bad.

Nobody, naturally, wanted to ascribe Hawking’s popular success to his rare form of Motor Neurone Disease, Hawking least of all. He afforded us no room for horror or, God forbid, pity. In 1990, asked a dumb question about how his condition might have shaped his work (because people who suffer ruinous, debilitating illnesses acquire compensating superpowers, right?) Hawking played along: “I haven’t had to lecture or teach undergraduates, and I haven’t had to sit on tedious and time-consuming committees. So I have been able to devote myself completely to research.”

The truth — that Hawking was one of the worst popular communicators of his day — is as evident as it is unsayable. A Brief History of Time was incomprehensible because after nearly five years’ superhuman effort, the author proved incapable of composing a whole book unaided. He couldn’t even do mathematics the way most people do it, by doodling, since he’d already lost the use of his hands. He could not jot notes. He could not manipulate equations. He had to turn every problem he encountered into a species of geometry, just to be able to think about it. He held his own in an impossibly rarified profession for years, but the business of popular communication was beyond him. As was communication, in the end, according to Hawking’s late collaborator Andy Strominger: “You would talk about words per minute, and then it went to minutes per word, and then, you know, it just got slower and slower until it just sort of stopped.”

Hawking became, in the end, a computerised patchwork of hackneyed, pre-stored utterances and responses. Pull the string at his back and marvel. Charles Seife, a biographer braver than most, begins by staring down the puppet. His conceit is to tell Stephen Hawking’s story backwards, peeling back the layers of celebrity and incapacity to reveal the wounded human within.

It’s a tricksy idea that works so well, you wonder why no-one thought of it before (though ordering his material and his arguments in this way must have nearly killed the poor author).

Hawking’s greatest claim to fame is that he discovered things about black holes — still unobserved at that time — that set the two great schools of theoretical physics, quantum mechanics and relativity, at a fresh and astonishingly creative loggerheads.

But a new golden era of astronomical observation dawned almost immediately after, and A Brief History was badly outdated before it even hit the shelves. It couldn’t even get the age of the universe right.

It used to be that genius that outlived its moment could reinvent itself. When new-fangled endocrine science threw Ivan Pavlov’s Nobel-winning physiology into doubt, he reinvented himself as a psychologist (and not a bad one at that).

Today’s era of narrow specialism makes such a move almost impossible but, by way of intellectual compensation, there is always philosophy, a perennially popular field more or less wholly abandoned by professional philosophers. Images of the middle-aged scientific genius indulging its philosopause in book after book about science and art, science and God, science and society and so on and so forth, may raise a wry smile, but work of real worth has come out of it.

Alas, even if Hawking had shown the slightest aptitude for philosophy (and he didn’t), he couldn’t possibly have composed it.

In our imaginations, Hawking is the cartoon embodiment of the scientific sage, effectively disembodied and above ordinary mortal concerns. In truth, life denied him a path to sagacity even as it steeped him in the spit and stew of physical being. Hawking’s libido never waned. So to hell with the philosopause! Bring on the dancing girls! Bring on the cheques, from Specsavers, BT, Jaguar, Paddy Power. (Hawking never had enough money: the care he needed was so intensive and difficult, a transatlantic air flight could set him back around a quarter of a million pounds.) Bring on the billionaires with their fat chequebooks (naifs, the lot of them, but decent enough, and generous to a fault). Bring on the countless opportunities to bloviate about subjects he didn’t understand, a sort of Prince Charles only without Charles’s efforts at warmth.

I find it impossible, having read Seife, not to see Hawking through the lens of Jacobean tragedy, warped and raging, unable even to stick a finger up at a world that could not — but much worse, *chose* not — to understand him. Of course he was a monster, and years too late, and through a book that will anger many, I have come to love him for it.