Reading Be Mine by Richard Ford for the Times, 22 June 2023

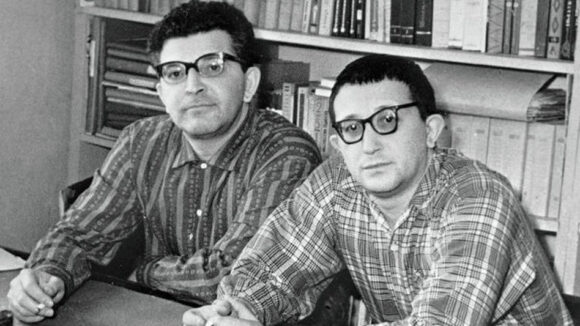

Move up there: Richard Ford is back again, and once again he’s got Frank with him, his wayward alter ego.

Since this is Ford’s fifth exploration of the consciousness of sportswriter-turned-realtor Frank Bascombe, here’s a summary. (You don’t strictly need it; it’s not that sort of a series. But there’s no harm in being orientated.) As a young man in the late 1970s, Frank nursed big dreams. In time he learned to pack them away. He got married, had children, and watched one of them die — an event that, not too surprisingly, spelled the end of his relationship. He married again, not very successfully. He’s retired now and wedged comfortably, if bemusedly, in America’s post-retail uncanny, where nothing has any obvious relation to anything else — “The gravestone company that sells septics, the pet supply that offers burials at sea, the shoe store that sells baseball tickets”.

Frank Bascombe is an ordinary man, and this is the fifth instalment of his ordinary life.

Ford’s keenly observing, wisecracking alter ego seems, on the face of it, an unlikely focus for more than three decades of dedicated effort. Frank has spent most of his life selling real estate. Before that he was a sportswriter. Once upon a time he wanted to be the next Raymond Carver, but in his late thirties he decided to get a real job.

This is where Ford and Bascombe parted ways. Ford, too, once tried to get a real job, but he wasn’t nearly as savvy as his alter ego, and couldn’t make a dime except by becoming a literary giant and our pre-eminent proponent of American realism.

Frank remembers reading that in good novels, “anything can follow anything, and nothing ever necessarily follows anything else.”

This is simply Ford removing the safety-net before embarking on his latest high-wire act. Of course there’s a plot. I’d go so far as to say that there’s a hero’s journey here, as Frank arranges one last trip for himself and his surviving son Paul, a long, flat, boring drive across South Dakota to Wyoming, and Rapid City, and — of all places — Mount Rushmore, “most notional of national monuments, and thus most American”.

Paul has been diagnosed with ALS, a neurodegenerative condition that is uncoupling his muscles from his brain in something like real time as we read.

Our privileged access to the cockpit of Frank’s head comes at significant emotional cost. There’s no fire exit for us here — no chill-out space scattered with comfy abstractions, opinions or Fine Writing. We’re in for the long haul — Hartford, South Dakota — Mitchell, South Dakota —

Ford being Ford, of course, it all goes like the clappers, leaving us teary and exhilarated (reading Ford is really like getting laid).

For four volumes now, Frank has been learning to navigate the downpour of disconnected stuff that makes up his ordinary life (much of it in New Jersey), stringing eventoids together in ways that will carry meaning. This necessity, to turn one’s own life into a story and thereby remain halfway sane, hit 38-year-old Frank with the force of revelation when he first appeared, in The Sportswriter, in 1986.

Now he’s in his seventies, and knows what he’s about, dogged in his pursuit of meaning in a life that (as is usual) happens to him while he is making other plans. (“Why do we not do things?” Frank wonders. “It is a far richer question than why we do.”) Here is a master at work. And I don’t mean Ford (who needs no whoop-hooooorahs from me); I mean Frank.

This is the adventure of a man desperately trying to make life as little like an adventure as possible for his balding, warty, forty-seven-year-old son: an oddball for whom “connections between the heartfelt and the preposterous are his yin and yang”, and who is dying, as we watch, of a disease people regularly kill themselves to avoid. “Short of joining the Zion Lutherans, setting out nasturtiums and registering to vote,” Frank explains, “I’ve done all I can to solidify an idea of normal life for us, so we’re not constantly peeking around the sides of things to confront life’s shuddering advances.”

But is Frank’s everything enough? Frank knows he’s weak, distractible and (who’s to say?) a little bit empty inside. His son certainly says so, but then his son only ever talks in one-liners (absurd, barbed, both); they’re his strategy for eluding experience. His daughter Clarissa knows so, but that’s her trouble: she thinks that people are knowable, and that opinions suffice. She’s the sort of reader who would give up on Be Mine, complaining that there’s no plot.

So what happens? What gives?

Frank and his son spend chapters preparing to visit the Mayo Clinic in Rochester, where Paul, a volunteer and “medical pioneer”, is being “celebrated” at the conclusion of a research study. At the last minute, halfway down “death’s bright companionway” and twenty feet from the door, father and son peel away and go instead to pick up their camper van.

Halfway through the book, they’re ready to leave Rochester.

There’s a chapter in a Hilton Garden Inn.

There’s a chapter in The World’s Only Corn Palace (I’ve been there; Ford nails it).

There’s a chapter about choosing a near-derelict motel over the Fawning Buffalo Casino, Golf and Deluxe Convention Hotel near Wall, South Dakota.

And it’s here, just a few pages before Rushmore, that Ford tips his hand.

“‘I know we have to do what we have to do,’” says Patti, the motel owner; like most strangers met along this road, she’s sympathetic enough. “‘But we don’t always have to do the precise right thing for the precise right reasons all the time. Okay, Frank?’ She pyramids her dark eyebrows as if she’s imparting sacred truths anybody’d be crazy to ignore.”

And Frank, his shoulder screaming from the effort of lifting his crippled son into their van, takes one look down that primrose path and decides he’s sure as hell not going there: “And of course she’s wrong! Dead wrong! Should I not care that I’m doing what I’m doing and why? Or how I’m doing it? With my only son? Is that ever true?”

Good stories have cracking plots about heroes who must face impossible odds and make great sacrifices. Frank does this each time he orders breakfast. He holds himself together the way you and I hold ourselves together (or try to): by snatching at straws in the maelstrom of everyday life (whatever the hell that is).

And Be Mine is Frank — a 20-foot model of the Titanic assembled from matchsticks.

Or picture this (since that matchstick Titanic might inspire admiration, but never love): picture a novel that feels truer to experience than your own experience.

Or this (since we’re none of us getting any younger, and this is likely Frank’s swan-song): the chance to spend a last few hours with a friend.