In 1817, in a book entitled Experiments on Life and its Basic Forces, the German natural philosopher Carl August Weinhold explained how he had removed the brain from a living kitten, and then inserted a mixture of zinc and silver into the empty skull. The animal “raised its head, opened its eyes, looked straight ahead with a glazed expression, tried to creep, collapsed several times, got up again, with obvious effort, hobbled about, and then fell down exhausted.”

The following year, Mary Shelley’s Frankenstein captivated a public not at all startled by its themes, but hungry for horripilating thrills and avid for the author’s take on arguably the most pressing scientific issue of the day. What was the nature of this strange zone that had opened up between the worlds of the living and the dead?

Three developments had muddied this once obvious and clear divide: in revolutionary France, the flickers of life exhibited by freshly guillotined heads; in Edinburgh, the black market in fresh (and therefore dissectable) corpses; and on the banks of busy British rivers, attempts (encouraged by the Royal Humane Society) to breathe life into the recently drowned.

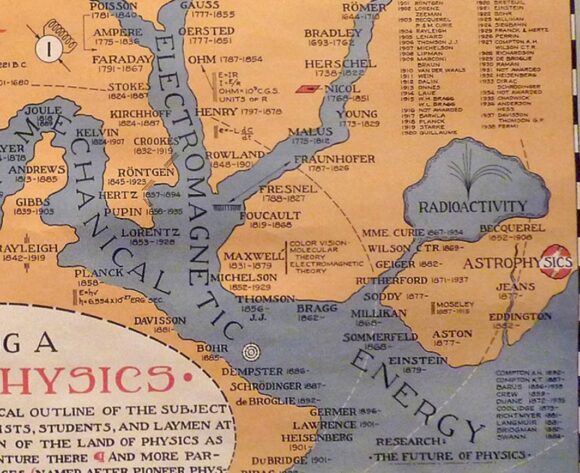

Ruston covers this familiar territory well, then goes much further, revealing Mary Shelley’s superb, iron grip on the scientific issues of her day. Frankenstein was written just as life’s material basis was emerging. Properties once considered unique to living things were turning out to be common to all matter, both living and unliving. Ideas about electricity offer a startling example.

For more than a decade, from 1780 to the early 1790s, it had seemed to researchers that animal life was driven by a newly discovered life source, dubbed ‘animal electricity’. This was a notion cooked up by the Bologna-born physician Luigi Galvani to explain a discovery he had made in 1780 with his wife Lucia. They had found that the muscles of dead frogs’ legs twitch when struck by an electrical spark. Galvani concluded that living animals possessed their own kind of electricity. The distinction between ‘animal electricity’ and metallic electricity didn’t hold for long, however. By placing discs of different metals on his tongue, and feeling the jolt, Alessandro Volta showed that electricity flows between two metals through biological tissue.

Galvani’s nephew, Giovanni Aldini, took these experiments further in spectacular, theatrical events in which corpses of hanged murderers attempted to stand or sit up, opened their eyes, clenched their fists, raised their arms and beat their hands violently against the table.

As Ruston points out, Frankenstein’s anguished description of the moment his Creature awakes “sounds very like the description of Aldini’s attempts to resuscitate 26-year-old George Forster”, hanged in January 1803 for the murder of his wife and child.

Frankenstein cleverly clouds the issue of exactly what form of electricity animates the Creature’s corpse. Indeed, the book (unlike the films) is much more interested in the Creature’s chemical composition than in its animation by a spark.

There are, Ruston shows, many echoes of Humphry Davy’s 1802 Course of Chemistry in Frankenstein. It’s not for nothing that Frankenstein’s tutor Professor Waldman tells him that chemists “have acquired new and almost unlimited powers”.

An even more intriguing contemporary development was the ongoing debate between the surgeon John Abernethy and his student William Lawrence at the Royal College of Surgeons. Abernethy claimed that electricity was the “vital principle” underpinning the behaviour of organic matter. Nonsense, said Lawrence, who saw in living things a principle of organisation. Lawrence was an early materialist, and his patent atheism horrified many. The Shelleys were friendly with Lawrence, and helped him weather the scandal engulfing him.

The Science of Life and Death is both an excellent introduction and a serious contribution to understanding Frankenstein. Through Ruston’s eyes, we see how the first science fiction novel captured the imagination of its public.