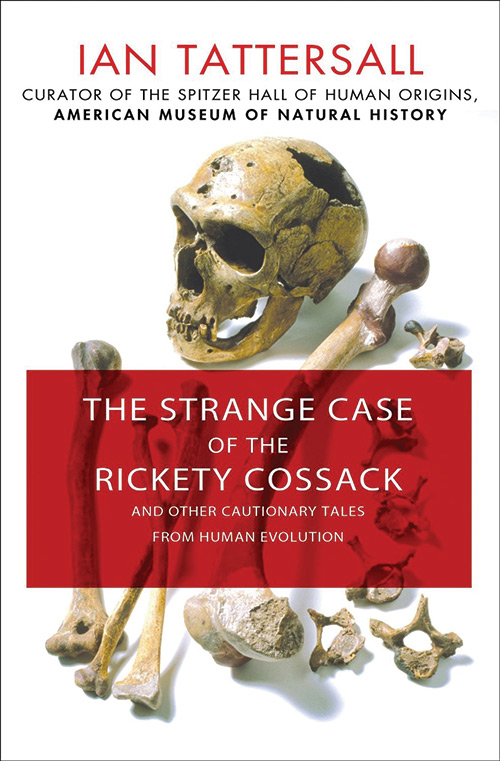

Sandi Mann’s The Upside of Downtime and Marc Wittmann’s Felt Time: The psychology of how we perceive time, reviewed for New Scientist, 13 April 2016.

VISITORS to New York’s Museum of Modern Art in 2010 got to meet time, face-to-face. For her show The Artist is Present, Marina Abramovic sat, motionless, for 7.5 hours at a stretch while visitors wandered past her.

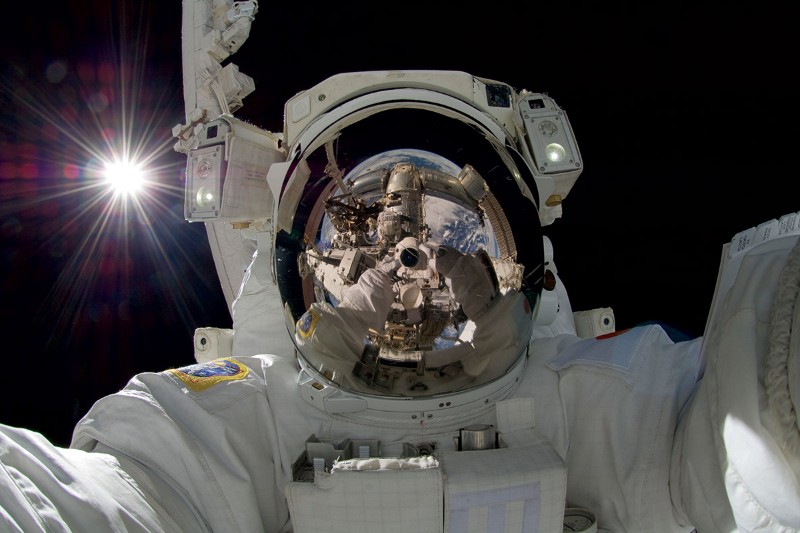

Unlike all the other art on show, she hadn’t “dropped out” of time: this was no cold, unbreathing sculpture. Neither was she time’s plaything, as she surely would have been had some task engaged her. Instead, Marc Wittmann, a psychologist based in Freiburg, Germany, reckons that Abramovic became time.

Wittmann’s book Felt Time explains how we experience time, posit it and remember it, all in the same moment. We access the future and the past through the 3-second chink that constitutes our experience of the present. Beyond this interval, metronome beats lose their rhythm and words fall apart in the ear.

As unhurried and efficient as an ophthalmologist arriving at a prescription by placing different lenses before the eye, Wittmann reveals, chapter by chapter, how our view through that 3-second chink is shaped by anxiety, age, boredom, appetite and feeling.

Unfortunately, his approach smacks of the textbook, and his attempt at a “new solution to the mind-body problem” is a mess. However, his literary allusions – from Thomas Mann’s study of habituation in The Magic Mountain to Sten Nadolny’s evocation of the present moment in The Discovery of Slowness – offer real insight. Indeed, they are an education in themselves for anyone with an Amazon “buy” button to hand.

As we read Felt Time, do we gain most by mulling Wittmann’s words, even if some allusions are unfamiliar? Or are we better off chasing down his references on the internet? Which is the more interesting option? Or rather: which is “less boring”?

Sandi Mann’s The Upside of Downtime is also about time, inasmuch as it is about boredom.

Once we delighted in devices that put all knowledge and culture into our pockets. But our means of obtaining stimulation have become so routine that they have themselves become a source of boredom. By removing the tedium of waiting, says psychologist Mann, we have turned ourselves into sensation junkies. It’s hard for us to pay attention to a task when more exciting stimuli are on offer, and being exposed to even subtle distractions can make us feel more bored.

Sadly, Mann’s book demonstrates the point all too well. It is a design horror: a mess of boxed-out paragraphs and bullet-pointed lists. Each is entertaining in itself, yet together they render Mann’s central argument less and less engaging, for exactly the reasons she has identified. Reading her is like watching a magician take a bullet to the head while “performing” Russian roulette.

In the end Mann can’t decide whether boredom is a good or bad thing, while Wittmann’s more organised approach gives him the confidence he needs to walk off a cliff as he tries to use the brain alone to account for consciousness. But despite the flaws, Wittmann is insightful and Mann is engaging, and, praise be, there’s always next time.